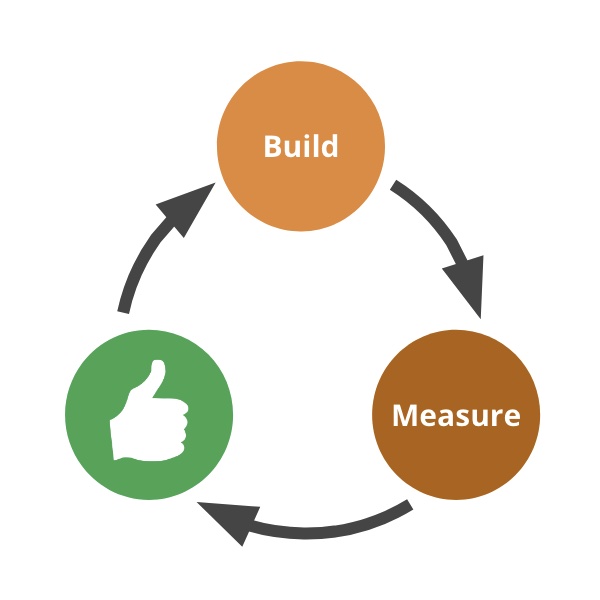

Experimentation is becoming more commonplace, but this positive development is often hampered by a common problem. I see company after company testing product ideas with users and customers, collecting the results, but then failing to take meaningful action — no parking of ideas or pivoting. I call this the Build-Measure-LooksGood! loop.

Why is this happening? Old-school mindsets push us to believe that we don’t have anything significant to learn, or that the decision of the HiPPO (the highest-paid person’s opinion) or committee is always right. Pressure to launch discourages us from stopping to think and changing plans. Pushing forward with the idea is the easiest path, so we find creative ways to disregard or subvert the results.

Being critical of test results and using judgment is an important part of the process. But that’s not the same as forcing the results to conform to our beliefs. Doing the latter subverts experimentation and turns it into a meaningless, often political exercise.

In this article I’ll go over some of the common rationales you may hear, and suggest ways to deal with them.

The Results Are Positive!

This statement is the bane of user researchers and data scientists the world over. An optimistic and overly confident executive or product manager will find a way to spin the results in the most positive way possible, turning the interpretation into a battle of opinions in which the more senior or influential person usually wins. One common technique is to focus only on the good news and downplay the bad. Another is to move the goalposts, claiming that these results are exactly what we expected.

What to do:

- Define success in advance: as part of planning the experiment, we should call out clearly what we aim to measure and what would constitute success. You can use hypotheses or any other format. Be aware that it’s sometimes challenging to predict what’s possible, and some adjustment in light of the results is normal and healthy. Having said that, if you find yourself significantly lowering the bar post-test, you’re likely just giving yourself a free prize.

- Build up user researchers and data analysts as the recognized experts in interpreting results.

- During the analysis bring in outside people who have experience in testing and can offer an unbiased opinion.

- Move from a binary definition of success/failure to a more nuanced ICE analysis: what do we think the Impact and Ease of this idea are in light of the results? What’s our level of Confidence? Here’s a full real-world example.
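To make the last point concrete, here is a minimal sketch of re-scoring an idea before and after a test. The article only names the three ICE components; the 0-10 scale, the multiplication of the three values, the `IceEstimate` class, and the sample numbers are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IceEstimate:
    """One ICE assessment of an idea. The 0-10 scale is an assumption."""
    impact: float      # expected effect on the target metric
    confidence: float  # how strong the supporting evidence is
    ease: float        # how cheap the idea is to build and launch

    def score(self) -> float:
        # Multiplying the three values is one common convention, assumed here.
        return self.impact * self.confidence * self.ease


# Hypothetical numbers: before the test we guessed high impact on weak evidence.
before = IceEstimate(impact=8, confidence=2, ease=5)
# After the test the evidence is stronger, but it points to lower impact
# and a harder build than we hoped.
after = IceEstimate(impact=3, confidence=5, ease=4)

print(before.score(), after.score())
```

The point of re-scoring is that the conversation shifts from "did it pass?" to "what do we now believe, and how sure are we?", which leaves less room to spin a single headline number.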

We Tested With the Wrong People/Companies

This of course may be true, and if it is we should disregard the results and rerun the test. Otherwise it’s just another way to move the goalposts and claim false success.

What to do: It’s important to identify the target market segment or user persona long before we start building and testing — ideally at the goals stage. This makes it easier to recruit the right type of participants. It’s okay to change target markets given new information, but not every time it suits our needs.


Some Users Are Loving It!

I once worked with a startup that used a variety of research techniques to test the product it was developing. The results were consistent: most target users didn’t find much value in the product and didn’t stick around, but a small group of power users loved it and stayed very engaged. This signal kept throwing the team off, making it believe it was on to a solid idea that just needed a better user interface. After multiple quarters of UI iterations it became clear that mainstream users would never adopt the product, and the team pivoted to a better idea, but by that time they had burnt too much cash and had to shut down.

Having some people react positively may be a sign that we’re on the right track, but it may also be just a weak signal. Whatever we develop, someone somewhere is bound to love it. These folks might just as easily fall in love with something else and dump our solution. We have to prove to ourselves that there’s a sufficient real market beyond the early adopters.

What to do: delve deep into the differences between the users/customers that love the product and everyone else. Then ask whether you should narrow your target market just to these folks, pivot your idea to make it more broadly useful, or move on to the next idea. For a practical example see this article by Superhuman.

The Experiment Biased The Results

This is definitely possible and even quite common. If that’s the case we should fix the test and rerun it. If it’s not the case, it’s just a way of throwing mud at the experiment (and the people who conducted it) as a way to protect your pet idea.

What to do:

- Review the test methodology thoroughly ahead of time with a user researcher, a data analyst, or other experts.

- (Optional) Conduct a few test runs and adjust before going full-on.

- Analyze the results thoroughly. If anything doesn’t make sense, look for potential biases introduced by the test, or for bad data. If you find either, dump the results and rerun the test.


The Results Are Inconclusive — Let’s Proceed

Seeing no measurable improvement, or seeing conflicting results, is actually the outcome of most tests. Most product ideas simply don’t create any positive change, yet every idea brings some costs and risks. The default conclusion in this case ought to be to park the idea, or to pivot and retest it.

What to do: establish a rule of thumb that an idea is wrong until proven otherwise.
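One way to encode this rule of thumb for a simple A/B conversion test is sketched below. The article does not prescribe any statistical method; the `decide` function, the one-sided normal-approximation confidence bound, the `min_lift` threshold, and the sample numbers are all assumptions chosen for illustration. The default answer is to park or pivot, and the idea proceeds only if even the pessimistic end of the estimate clears a bar that was set before the test.

```python
from math import sqrt
from statistics import NormalDist


def decide(control_conv: int, control_n: int,
           variant_conv: int, variant_n: int,
           min_lift: float, alpha: float = 0.05) -> str:
    """Return "proceed" only if the evidence clears the pre-agreed bar.

    min_lift is the absolute improvement in conversion rate that was
    defined as success before the test (a hypothetical parameter).
    """
    p_c = control_conv / control_n
    p_v = variant_conv / variant_n
    diff = p_v - p_c
    # Standard error of the difference between two proportions.
    se = sqrt(p_c * (1 - p_c) / control_n + p_v * (1 - p_v) / variant_n)
    # One-sided lower confidence bound on the true difference.
    lower_bound = diff - NormalDist().inv_cdf(1 - alpha) * se
    # The idea is "wrong until proven otherwise": anything short of
    # clearing the bar, including an inconclusive result, means parking.
    return "proceed" if lower_bound >= min_lift else "park or pivot"


# Hypothetical results: a small observed lift that could easily be noise.
print(decide(100, 1000, 110, 1000, min_lift=0.0))
# A larger lift that clears a bar of one percentage point.
print(decide(100, 1000, 150, 1000, min_lift=0.01))
```

Because `min_lift` is an argument rather than something chosen after looking at the data, the sketch also makes it harder to move the goalposts once the results are in.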

Our Type Of Product/Market Cannot Be Truly Tested

This is yet another way to disregard unfavorable results: in our special case the only reliable test is a full launch. In my experience this statement is practically never true. Some products — enterprise B2B, hardware, regulated industries — are harder to test, but with creativity and patience they’re definitely testable (see my eBook Testing Product Ideas for suggestions). In any case this is feedback that should come before the team invests time and effort in testing.

What to do: start by agreeing that experimentation and learning are both necessary and possible in our company. Discuss the test methodology in advance and solicit feedback before you run the test. Try to get everyone to agree that this is indeed a valid approach, or at least build a sufficiently large consensus to support you when the results come in.

This Idea Was Already Confirmed Through Surveys, Internal Reviews, Customer Requests, Competitor Analysis…

These are all weak forms of evidence that are enough only for the smallest and least risky ideas. For everything else we must find stronger evidence.

What to do: use the Confidence Meter during analysis.

We’ve Already Invested Too Much Time And Effort To Change

Throwing good money after bad (the Sunk Cost fallacy) is unfortunately very common in product development. The better approach is to cut our losses by either pivoting or parking bad ideas, no matter at what stage.

We’re Weeks Away From Launching

Doesn’t matter. Even if fully launched, the right thing to do with a bad idea is to unlaunch it. A bad feature is like a bad stock investment — it will keep costing us for years.

We Should Just Launch And See

A full launch is the most expensive and risky way to learn — essentially testing with 100% of your users. It’s also the hardest to fix, and the one we’re least likely to want to pursue.

Bottom Line: Stick to the Rules

In my early days in software development (mid-90s and early 2000s) we used to slap “beta-release” at the end of every project. It was just another box in the project checklist, and we saw the beta as an opportunity to uncover some hidden bugs. The project was already approved quarters or years before, and the specification and design were set in stone. The opinions of the users didn’t really matter at this point.

Companies that wish to build products like Amazon, Google, or Airbnb have to do away with this archaic mindset. Experimentation isn’t just another process we add to the development cycle; it’s a complete shift in how we develop products. We have to be much more humble and curious, and let the evidence guide us (as usual, Amazon has an internal meme: We go where the data leads us). Changing the rules of experimentation when it suits us is neither fair nor helpful. Embracing the evidence and making tough decisions is the only way to reap the rewards: high-impact features and products.